Inside Our Fallible Brains

How to outsmart illogical thinking

I’ve always prized rationality over emotionally based thought. I like facts. Proofs. Solid arguments to back a theory.

I’m not the type to fall for marketing gimmicks or get hooked by the emotional tugs in advertisements. I take the long view. I make well-informed decisions when I shop, not emotional choices — or so I smugly asserted to myself.

Then I read Daniel Kahneman’s best-seller, Thinking, Fast and Slow, and my identity, as I knew it, took a black eye. Turns out, I’m not as rational as I thought.

But no one else is, either. Kahneman’s book details many (so, so many) logical fallacies that people instinctively and unconsciously stumble into.

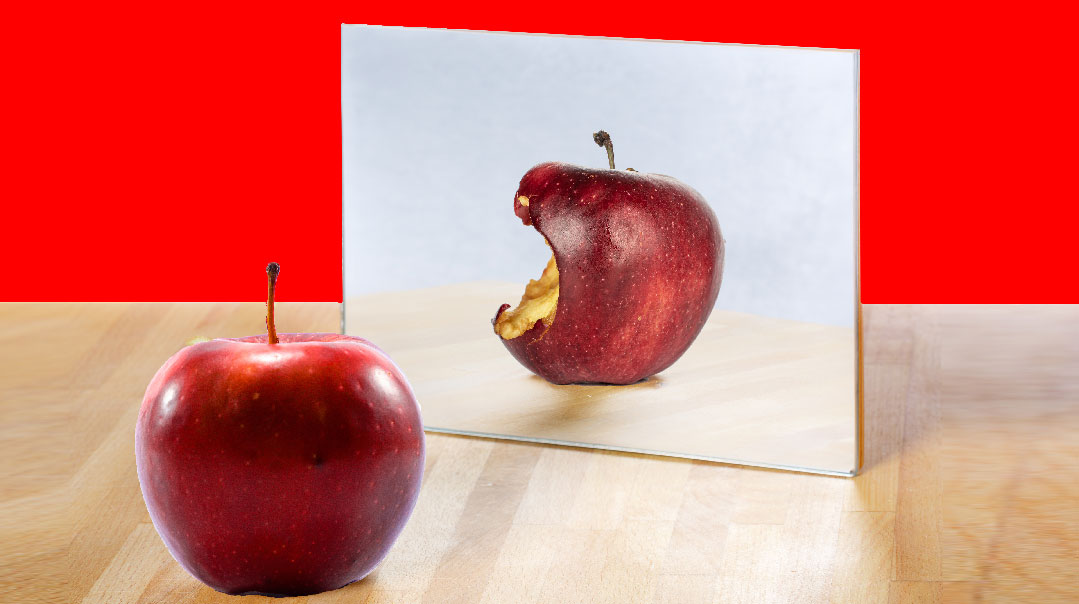

I’d always imagined that the heart (emotions) and the brain (rational practicality) were separate entities in the human psyche. The two are usually depicted in constant struggle — how often have we heard, “My heart is saying ‘yes’ while my mind says ‘no’ ”?

But analytics and sentiment are not neatly separated by bodily organs; they’re actually intermingled, to the point that when we think we’re engaging in rational thought, we’re unwittingly acting emotionally.

But wait, there’s hope! If we learn how the mind actually works, we can teach ourselves to actively outsmart our illogical thinking.

A Model of the Mind

Kahneman, a psychologist who won the Nobel Prize in Economics for his discoveries about behavioral economics, breaks down the human thought process into two primary categories, labeled System 1 and System 2 (based on the Keith Stanovich model of the mind). These Systems run on “heuristics,” simple processes that help us reach adequate, if imperfect, conclusions when faced with hard questions.

System 1 is fast and involuntary, and home to reflexive reaction (see cake, want cake, eat cake). System 2 is slow and requires effort and is the source of self-control (walk away from cake and make do with carrot sticks).

Since System 2 requires more exertion, it can get tired (and you might get your cake). As David Ropeik (the author of How Risky Is It, Really?) wrote in the New York Times, “the basic architecture of the brain ensures that we feel first and think second.”

The “WYSIATI” Factor

Aside from being a little lazy, System 1 also tends to jump to conclusions. Kahneman uses the clumsy acronym WYSIATI, which stands for “What you see is all there is.” System 1 needs a coherent story to work with, so when in operation, our minds leap to basic assumptions with the limited information available.

Our brain doesn’t like being in the state of unknown. That’s why System 1 frantically comes up with a story to make sense of it, and therefore, we come up with all sorts of erroneous narratives. Brené Brown provided a personal example of this in her book Rising Strong. She and her husband were out on a date, and twice she attempted meaningful conversation. Twice he didn’t respond. The story she created in her mind was that he wanted a divorce. She called him on it instead of stewing, and it turned out he was preoccupied with another matter, fabricating a story of his own.

We’d like to think that System 2 — which raises doubts and analyzes their legitimacy — will prevent the potentially faulty conclusions of System 1, but System 2 doesn’t have much work ethic. That’s why it’s so easy to judge others unfavorably — our default programming is designed to.

First Impressions

Because System 1 operates on WYSIATI, it’s especially prone to the “primacy effect,” which in essence means (as my mother always said) that first impressions really matter (and why she was a great believer in full-time makeup). Whatever information we’re exposed to first will color our opinion either for the positive or negative, and pretty much stay that way.

This connects to the “halo effect”; if the initial impression of someone is positive, then one will assume they’re a wonderful human being, through and through, even if more “human” qualities are discovered later. The reverse is also true — the “horns effect.” Despite new details coming to light, we tend to stubbornly cling to our original biases.

Feelings Outweighing Facts

The “affect heuristic” is when we let our current feelings overwhelm the facts. This is most obvious with fear. Do I logically know there is no such thing as the boogie man? Yes. But I’m still not fond of the dark. Okay, I’m close to petrified. I still cover my toes at night with a blankie so the monsters don’t get me.

We’d think that the weight we give to risks would equate with statistics, but… no. Take the fear of kidnapping, which persists despite the fact that the rate of child abductions by strangers is very, very low. The National Center for Missing and Exploited Children received 25,000 reports of missing children in 2018; only 77 were abductions by non-family members.

In previous generations, and even today in countries other than the US, babies were or are left outdoors in their carriages, unsupervised, for regular airings; now, we are gripped with a terror of youngsters being snatched the moment our backs are turned. One extreme story and all children are put on lockdown, while there are much, much greater dangers with higher rates of damage.

A family friend, a psychologist my parents would often host when he was in town, would say there is no point in asking for shidduch information, as the responses can be unfairly prejudiced. He’d illustrate: One morning, Mr. Gross, while putting out the garbage, sees his neighbor, Mr. Klein, getting into his car, and bellows a cheerful “Good morning!” Mr. Klein doesn’t reply. Mr. Gross, feeling a tad stupid, walks back into his house.

When the phone rings moments later, inquiring about Mr. Klein’s son, chances are that Mr. Gross will be less than effusive. However, he’s unaware that Mr. Klein slept badly the night before, or his mother is unwell, or he has questionable hearing. But WYSIATI, and Mr. Gross’s System 1 fell for it.

Another instance of illogical reasoning is when System 1 takes our current emotional state and uses that to answer a question regarding the long term, or an inquiry that has nothing to do with emotion. Say I slept badly one night, and someone asks me the following morning to do them a favor next week (more specifically, I’m asked by this publication to have a piece in by a certain deadline). I’m so out of my mind with exhaustion at the moment that I can’t believe one good night’s rest will have me bright-eyed by tomorrow; I willingly refuse to commit because I’m tired now (as the editor of this publication can confirm. Ahem).

False Predictions

“I knew that’s what was going to happen!” is a favorite utterance of many (along with “told you so”). However, let’s be honest: We merely thought something might happen; we didn’t know it.

This stems from the “narrative fallacy”: we believe we thoroughly understand the past, and assume that we can therefore predict the future. Yet (a) we don’t understand the past as well as we think we do and (b) it doesn’t follow that we can therefore foretell what will be. Yes, there were times when we thought something might happen, and it did. But there were times when it didn’t. We just kept quiet then.

Kahneman is clearly unfamiliar with the concept of Hashgachah pratis. He uses the word “luck” a lot, especially in the case of good outcomes supposedly resulting from human decision making. There are tomes dedicated to successful businesspeople, granting credit to specific choices and methods. However, Kahneman claims, such feats are less about brilliant decisions and more about “luck”: right place, right time, etc. In hindsight, these moves were vividly obvious, but that’s only because they worked out.

All these fallacies contribute to a fantasy of control, the opposite of “luck.” We like to think it was all due to the leadership style of the CEO, who gets the credit or the debit. Yet there are so many other factors that contribute to a company’s achievements beyond one person’s choices. In the end, there are few guarantees for success. Hashgachah pratis determines success: After all, Rav Avraham ibn Ezra couldn’t make a living no matter how hard he tried, to the point that he said, “If I sold candles, the sun would stop setting, and if I sold burial shrouds, people would stop dying.” Businesses take off or flop, often for reasons unknown.

It’s the same premise with so-called experts who make predictions. They really can’t, at least not accurately. Experts can only deal with so many elements at one time, and our world operates on myriads of dynamics. I don’t bother tuning in to preelection-poll analysis hysteria, and the proof was evident in the last presidential race: No one saw that happening.

Compelled to Compare

Dan Ariely’s Predictably Irrational is another book that will make you question every decision. Not only are we irrational, he states, the irrationality is systemic and therefore, predictable (hence the title).

His examples deal more with everyday shopping. He begins by explaining that we don’t really know the actual value of an item; we base its worth on comparison. Ergo, if I’m dithering between three different Dijon mustards in the supermarket, chances are I’ll go with the middle-priced one (in my case, I concluded that the priciest one is unnecessarily so, while the cheapest contains mediocre ingredients). Yet is there any established truth to the basis of my decision? Nope.

We rely so much on comparisons, Ariely says, that until a product has a competitor, we won’t even give it a second glance. He even references the Commandment, “Do not covet,” explaining that we only experience envy when we compare what we already own to another’s possessions.

It’s due to our comparison programming that we can become subject to the “keeping up with the Joneses” mentality. It’s an expensive, exhausting, endless game of escalation that easily gets out of hand, provided we allow ourselves to be subject to our predetermined settings.

This concept of relativity shows how individual viewpoints are shaped. I considered this in terms of the area I live in. When I first moved in, I was smitten. In my observations, the outlook and friendliness of my new locale were ideal as opposed to the less accepting mindset of my previous community. Yet after conversing with those who originated from communities with even more casual and welcoming attitudes, I discovered that my paradise was mediocre in their eyes.

When relativity applies to purchases, my go-to example is from Kahneman: One needs a microwave. The nearby store has one for $150, while another store across town is selling it for $100. Many of us would make the journey for the $50 savings, right?

However, let’s say one is in the market for something pricier, like a living room set. The nearby option is $2,050; the one across town is $2,000. Would you make the trek to save the money? Most wouldn’t. The difference between $100 and $150 is more glaring than $50 relative to $2,000. But it’s still the same money saved or spent.

Anchors Away

The phenomenon of “anchoring” drives further comparisons. The first time we see something associated with something else, there it stays. To illustrate: when I was three, I enjoyed my first milkshake, then promptly threw up. I never had a milkshake again. The anchor had dropped firmly into place.

Anchoring applies to prices, which leads to “arbitrary coherence.” Although price tags are randomly assigned, our first exposure to a value assigned to a product is an “anchor,” and we consider that the basis for comparison. My father, for instance, often says how when he was growing up in the ’50s, a bread roll cost a nickel. Considering that he’s an accountant, he’s certainly familiar with the concept of inflation, so I always wondered at this repeated reference, until I read about anchoring.

Arbitrary coherence explains why two people can have vastly different viewpoints on what is considered a reasonable price for an item. It’s not necessarily a matter of one being “cheap” and the other a “spendthrift.” It depends on their anchor.

The Perils of Free

Then there’s that dangerous word: “Free.” Oh, what we do for “free!” Ariely describes “free” as an “emotional hot button.”

Even if the free item doesn’t suit our purposes, and will likely end up being a millstone around our necks, we take two. Because when an item is free, there’s no risk of financial loss, so no downside! Except that we now have useless junk that clutters up our lives.

Ariely further unpacks the concept of “free” as it relates to social norms and market norms. Under social norms, “free” means doing favors for others, like chesed. Under market norms, however, “you get what you pay for.”

Problems arise when the two separate concepts clash. Imagine: A fellow is informed that the lady he was courting has decided to call it off. One of his upset reactions may be, “But I spent so much on her!” He unchivalrously applied market norms to an accepted social norm: Obviously, a woman isn’t obligated to enter a lifelong relationship in return for dinner, which was merely the means to see if they were compatible.

When market norms are harnessed to social favors, the cheerful satisfaction we gain for doing something nice is deflated when price enters the picture. If ever offered money for babysitting my siblings’ children, I was hurt; I didn’t want to be paid for spending time with my nephews and nieces! Then I’d be insulted that the fee suggested was vastly lower than my usual hourly rate.

The passion we bring to a project is greater if it’s executed for a cause, rather than a salary. Charities flourish when volunteers bring their all, which they don’t do when there’s a paycheck. It sounds counterintuitive, but that’s how we operate.

What We Expect

“Tracht gut vet zein gut — Think good and it will be good,” the Yiddish saying goes, and it’s true; our expectations do influence our perception of events. The Meraglim were determined to only see the bad, and returned proclaiming the worst news possible.

Studies have shown that even when something nasty was added to a beverage, the drinkers didn’t think it tasted off unless they were told so before. We’re swayed by pretty presentation, even if the food is less than gourmet (think greasy takeout); a celebrated cellist will be ignored while performing in a grimy subway station.

The reverse is also true: Our perceptions influence our expectations. You sometimes hear about people who are bitterly disappointed when the house they love and have lived in for years sells for a fraction of their asking price. Those false expectations can be attributed to the “endowment effect,” which means that we value our own possessions more than others do.

The more we invest in something, the more ownership we feel. We assume others see what we see, which is all positives, whereas their view is less rose-tinted. That’s why downgrading is so difficult, as is changing our minds about an allegiance, like a political party or sports team. My brothers would never switch their loyalty from the Yankees, even if the Mets magically morphed into the best team in the US.

Thinking, Fast and Slow is approximately 500 pages, and Predictably Irrational is 380, so I’ve only scratched the surface of the fallacies here. The disheartening takeaway I had from both books was that a truly logical mind that functions with no input from emotions is extremely rare. Yet with awareness, we can train ourselves to see our thoughts and subsequent choices through a mindful lens, rather than operating on our faulty default settings.

(Originally featured in Family First, Issue 664)
